How We Created and Cross-Checked ~20,000 Engineering Models

How We Created and Cross-Checked ~20,000 Engineering Models

Engineering knowledge is scattered across textbooks, journal papers, (internal) wikis, and the heads of experienced engineers who have been doing it for decades. When an engineer needs to model a heat exchanger, a crystallization process, or a photovoltaic cell, they typically start from scratch, hunting down equations, re-implementing them in whatever tool they have at hand, and hoping nothing was transcribed incorrectly.

We asked ourselves: what if we could systematically extract that knowledge and make it available as ready-to-run, documented, cross-checked models?

The result is a library of ~20,000 engineering calculator models on engicloud.ai. Here is how we built it, and how we made sure the models are actually correct and non-redundant.

The benefit is clear: as LLMs make it easier and easier to create code, the challenge shifts to verification and validation

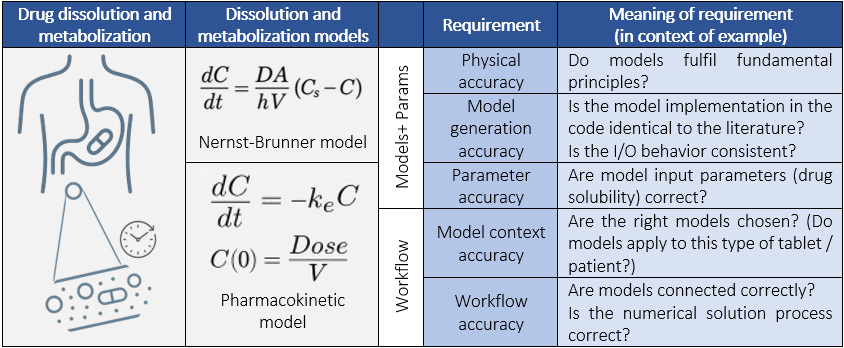

In order to allow Verification & Validation in the context of modeling for science and engineering, we need to employ structures that allow to "divide and conquer" and break the challenge down into smaller, manageable elements. This also helps to reduce hallucination rate (Dhuliawala et al - [1]).

Starting from Structure: Fields, Subfields, and Tasks

The first challenge was scope, as engineering is vast. We needed a principled way to carve it up without missing large swaths of knowledge or generating pointless duplicates right from the start.

We started with fields, 20 major engineering disciplines in total, covering everything from Mechanical and Chemical to Aerospace, Biomedical, Petroleum, and Marine Engineering. Each field represents a distinct professional community with its own vocabulary and canonical equations.

Each field was then broken into subfields, the specialist areas within a discipline. Mechanical Engineering splits into Fluid Mechanics, Heat Transfer, Thermodynamics, Tribology, and others; Chemical Engineering into Process Engineering, Reaction Engineering, Transport Phenomena, and more. Subfields matter because the same physical concept (say, pressure drop) is approached differently depending on context. A packed bed in a chemical reactor is not the same problem as a pipe network in a building.

Finally, each subfield was decomposed into calculation tasks, the concrete things an engineer actually sits down to compute. This is where the taxonomy connects to real work: not "Fluid Mechanics" as a concept, but "pressure drop in a packed bed", "pipe friction factor in turbulent flow", "reaction rate in a CSTR", or "heat exchanger duty for a two-phase stream".

We generated this taxonomy guided by standard engineering curricula and textbook chapter structures, producing 20 fields, 110 subfields, and 3,788 calculation tasks, the seeds from which all equations were grown.

Generating Equations at Scale

With thousands of calculation tasks in hand, the next step was to ask: for each task, what equations does an engineer need?

For every task in every subfield, we prompted an LLM to generate the set of equations an engineer would reach for. Each equation consists of the following elements

- Name: the canonical name an engineer would expect to find it under, making it immediately recognisable and searchable

- Type: whether the equation is algebraic, a first-order ODE, or a second-order ODE

- Formula: the governing equation in standard mathematical notation

- Inputs and outputs: what the model needs to run and what it produces, with physical units

- Description: a plain-language summary of what the equation computes and when it applies

Each task typically produced several equations such as governing laws, supporting correlations or boundary conditions. This is why thousands of tasks scaled up to roughly 90,000 equation records. Of these, around 60,000 were algebraic equations; the remainder were differential equations which were not yet supported by our platform at the time. Generating across so many overlapping fields and subfields also meant a significant number of duplicated equations, which we would need to deal with during content generation.

Content Generation: Python First, Platform Format Second

For each algebraic equation we generated a Python implementation. Python was the intermediate step because it is easier for an LLM to produce correct, testable code in Python before translating to our platform's backend format which requires typed input and output ports, parameter annotations, and structured documentation.

The transformation from Python to platform format was itself a multi-stage LLM pipeline:

- Code generation: raw implementation in the platform format, directly from the equation definition

- Validation and cleanup: syntax checking and first-pass corrections

- Parameter resolution: mapping variable names to physical quantities with correct units

- Documentation enrichment: adding context, cleaning up the theory section, ensuring the model is self-describing

- Metadata normalisation: final pass to align naming, structure, and search metadata across all models

Each stage could be rerun independently, which meant a bug or quality issue found late in the pipeline did not require regenerating everything from scratch.

Cross-Checking: Embedding-Based Duplicate Detection

Engineering fields overlap heavily. The same equation appears under different tasks, in different subfields, slightly reworded but fundamentally identical. Some examples: the Nusselt number correlation is, amongst others, relevant for force convection, heat exchanger design or reaction cooling. The Arrhenius equation can be found in applications ranging from reaction engineering to material science and biochemical engineering. Hook's law is used in structural engineering, materials science or when mechanical vibrations are calculated. Ohm's law will be found in electrical engineering, power systems or biomedical instrumentation.

Not considering this would lead to many duplicates in the database, many with slightly deviating names, making it hard for the user to select the model they actually want. In our workflow deduplication was woven into the content generation process itself and not a separate cleanup step at the end. As models were generated and documented, we continuously embedded each one along four semantic dimensions:

- Name: what the model is called

- Context: when and where an engineer would use it

- Theory: the underlying physical or mathematical background

- Usage: practical guidance on inputs, outputs, and assumptions

High similarity across these dimensions flagged candidate duplicate groups. An LLM then reviewed each group, partitioning the candidates into clusters and selecting the best representative from each. It was instructed that equations covering meaningfully different regimes or assumptions were not duplicates even if they looked similar on the surface. The canonical version of each cluster was kept; the rest were resolved out.

This reduced the roughly 40,000 generated models down to the ~20,000 unique, documented models in the library today.

Validation by Experts

During generation, we first verified that all ~20,000 models execute without errors (correct syntax, valid inputs, and no runtime failures), giving us confidence that the pipeline had produced well-formed, runnable models across the board. However, automated checks alone cannot replace an engineer running a model and checking whether the result makes sense.

Manual review of every model is not feasible at this scale, so we validated a representative sample of around 100 models. This was done as part of platform testing, where our internal engineers used the models to build workflows, checking outputs against expected results and confirming that the documented behaviour matched what the model actually computed. This targeted review covered a spread of fields and subfields, giving us a representative signal on output correctness before the platform goes live.

Dealing with Hallucinations

Hallucination in this context is concrete: equations with the wrong functional form, incorrect unit assignments, or references to papers that do not exist.

The main mitigation was structural. Rather than generating complete, documented models in a single prompt, we decomposed each task to the smallest unit an LLM can handle reliably, always one equation at a time, with name, formula, inputs, and outputs specified separately. This mirrors the Chain-of-Verification approach [1]: finer-grained steps mean errors stay local and are easier to catch. The multi-stage pipeline reinforced this and each stage could flag and correct problems before they reached the next.

The deduplication step also acted as an indirect check: models that drifted semantically toward a neighbour often indicated a hallucinated paraphrase rather than a grounded equation. Resolving against a canonical version surfaced these cases.

Finally, citations attached to each model provide a user-facing check.

What Every Model Comes With

A runnable equation is only half the picture. The harder question is whether this equation is the right one for your problem. That requires context that a formula alone cannot provide.

Every model in the library includes:

- Context : where in an engineering workflow you would reach for this model, what problem it solves, and what assumptions it relies on. This is what helps you decide before you run it.

- Theory: the physical and mathematical background behind the equation, with clean, correctly formatted notation. Useful when you need to understand what the model is actually doing, not just what it returns.

- Usage: practical guidance on applying the model: how to interpret the outputs, what the valid input ranges are, and where the model breaks down.

This matters because the most common mistake in engineering calculation is not a coding error but it is applying a correct equation in the wrong regime. A model for laminar pipe flow gives you a number even when your flow is turbulent; without the context to know that, you would never question it.

Having this documentation attached to every model is also what makes search useful. It is not just a label but searchable, structured knowledge that connects a user's problem description to the right model, even when they do not know the equation's name.

Each model's documentation also includes citations and references wherever possible. For experts, this means links to canonical publications (papers, textbooks, and conference proceedings), with [DOI](https://www.doi.org/) links for more recent work. For non-specialists, we link to [Wikipedia](https://www.wikipedia.org/) as a more accessible starting point. In both cases, the goal is the same: users should be able to verify what the model is doing and learn more if they want to.

The Numbers

Starting from 90,000 raw equations and cutting to 20,000 final models, each documented, typed, and ready to run.

What This Enables

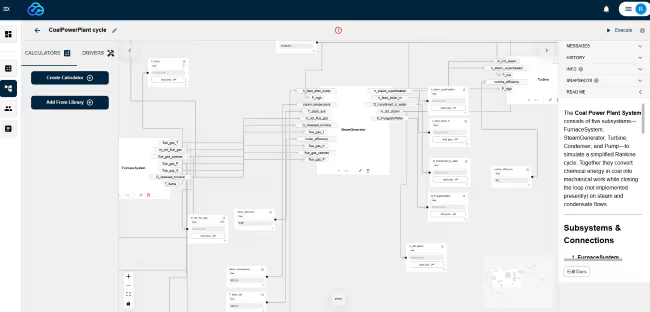

Instead of hunting down equations, checking unit consistency, and re-implementing the same formula for the tenth time, engineers can go straight to running a calculation. The library spans heat and mass transfer, fluid dynamics, reaction kinetics, thermodynamics, structural mechanics, electrochemistry, and more. Each model plug-and-play, documented, and tagged with the field and subfield it belongs to.

In the next article, we will look at how we built the semantic search layer that makes it practical to navigate this library of 20,000 models, finding the right one even when you do not know exactly what it is called.

---

[1] Dhuliawala, S., Komeili, M., Xu, J., Raileanu, R., Li, X., Celikyilmaz, A., & Weston, J. (2024). Chain-of-Verification Reduces Hallucination in Large Language Models. *Findings of the Association for Computational Linguistics: ACL 2024*, 3563–3578.

Sign-Up Now

We will be launching the engicloud.ai platform in Q1 2026.

Sign-Up to be the first to know when we go Live and get your

Launch Special Coupon Code (pssssst it's 20% Off).

We will also send you updates along the way, so you don't miss a beat.